
Implementing CNPs was a good exercise for me to learn more about Tensorflow. This problem disappears if we enforce a positive lower bound on the output variance. I only encountered one minor issue: When training the CNP, I sometimes find that it outputs NaN values. provide enough details about the implementation, so it was straightforward to reproduce their work. Opinion, and what I have learnedĬNPs and their generalizations promise great potential, as they alleviate the curse of dimensionality of Gaussian processes and have already shown to be powerful tools in the domain of computer vision. Garnelo et al.’s results look much nicer than mine, but my representation vector was only half the size of the one they used and they probably also spent more resources on training the CNP. Since they scale linearly with the number of sample points, and since they can learn to parametrize any stochastic process, we can also conceive the set of all possible handwritten digit images as samples from a stochastic process and use a CNP to learn them.Īfter just 4.8\times10^5 training episodes, the same CNP that I used for 1-D regression above, has learned to predict the shapes of handwritten digits, given a few context pixels: Now comes the really cool thing about CNPs. Of course, a GP with the same kernel as the GP that the ground truth function was sampled from performs better:īut this is kind of an unfair comparison, since the CNP had to “learn the kernel function” and we did not spend much time on training. In contrast to a GP, however, the CNP does not predict exactly the context points, even though they are given. When more points are given, the prediction improves and the uncertainty decreases. Notice that the CNP is less certain in regions far away from the given context points (see left panel around x \approx 0.75). as well as 100 target points on the interval that constitute the graph. For this example, the CNP is provided with the context points indicated by red crosses. 
In the plot above, the gray line is the mean function that the CNP predicts, and the blue band is the predicted variance. After only 10^5 episodes of training, the CNP already performs quite well: Please refer to my GitHub repository for updates.Īs a first example, we generate functions from a GP with a squared-exponential kernel and train a CNP to predict these functions from a set of context points.

I plan to add more results and a generalization to NPs at a later stage. I reproduced two of the application examples that Garnelo et al. We want to predict the values \boldsymbol. If you just want to know what you can do with CNPs, feel free to skip ahead to the next section, but a little bit of mathematical background can’t hurt □Ĭonsider the following scenario. As a first step, here I reproduce some of Garnelo et al.’s work on conditional neural processes (CNPs), which are the precursors of NPs.

Thus, I aim to build a Python package that lets the user implement NPs and all their variations with a minimal amount of code. Neural processes should come in handy for several parts of my Rubik’s Cube project. A well-known special case of an NP is the generative query network (GQN) that has been invented to predict 3D scenes from unobserved viewpoints.

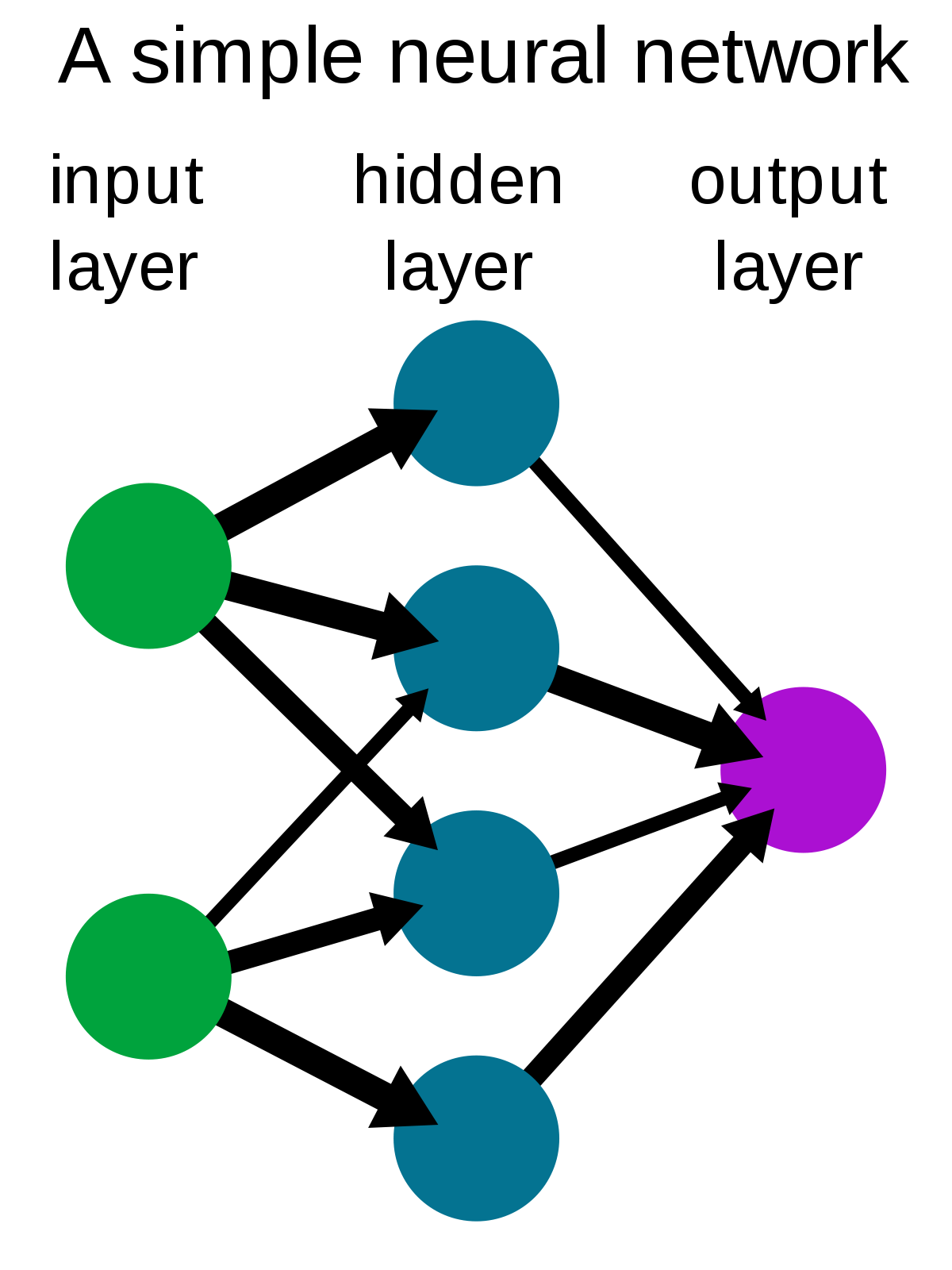

But in contrast to GPs, NPs scale linearly with the number of data points (GPs typically scale cubically ). In particular, similar to GPs, NPs learn distributions over functions and predict their uncertainty about the predicted function values. In my own wordsĪ neural process (NP) is a novel framework for regression and classification tasks that combines the strengths of neural networks (NNs) and Gaussian processes (GPs). To understand this post, you need to have a basic understanding of neural networks and Gaussian processes. This week’s article is “Conditional Neural Processes” by Garnelo et al.
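To make the NaN fix mentioned in this post more concrete, here is a minimal sketch of one way to enforce a positive lower bound on the predicted uncertainty. It is written in plain Python rather than TensorFlow and is not taken from my CNP code: the function names, the softplus mapping, and the floor value `MIN_SIGMA = 0.1` are all assumptions chosen for illustration. The sketch bounds the standard deviation, which bounds the variance as well (by `MIN_SIGMA ** 2`).

```python
import math

# Hypothetical floor on the standard deviation; the post does not state a value.
MIN_SIGMA = 0.1


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x)); can underflow to exactly 0.0."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def bounded_sigma(raw: float, min_sigma: float = MIN_SIGMA) -> float:
    """Map an unconstrained network output to a std. dev. >= min_sigma."""
    return min_sigma + (1.0 - min_sigma) * softplus(raw)


def gaussian_nll(y: float, mu: float, sigma: float) -> float:
    """Negative log-likelihood of y under a normal N(mu, sigma^2)."""
    return (
        0.5 * math.log(2.0 * math.pi)
        + math.log(sigma)
        + 0.5 * ((y - mu) / sigma) ** 2
    )


if __name__ == "__main__":
    # A very negative raw output would give sigma == 0.0 without the floor,
    # which makes log(sigma) and the division by sigma blow up.
    for raw in (-1000.0, 0.0, 5.0):
        sigma = bounded_sigma(raw)
        print(f"raw={raw:8.1f}  sigma={sigma:.4f}  nll={gaussian_nll(0.3, 0.0, sigma):.4f}")
```

Without the floor, a raw output far below zero drives the softplus to exactly 0.0 in floating point, and the log-likelihood then contains `log(0)` and a division by zero. In a TensorFlow graph these show up as infinities and NaNs rather than as Python exceptions.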
